Joar questions

Trying to answer mentor's questions related to my definition of Agency.

https://drive.google.com/file/d/1Oo00uCbTv5mLxF1W6okQI_SH3foxACK8/view

Generating Candidate Definitions

1 Is “agenticity” a binary property, where a reward function is either agentic

or not, or is it a matter of degree (between 0 and 1, say)?

I think it would be strange to limit agency by any value, either from above or below.

I cannot imagine a completely non-agentic entity, but if agency implies planning, environment cognition, and resource acquisition, then it could well go into the negative: forming incorrect representations, wasting resources, or detrimental planning.

From personal experience, I would start with

2 Does the “agenticity” of a reward function depend on the environment dynamics (i.e., transition function), or is it (or should it be) invariant of the environment dynamics?

It is obvious to me that this is a quantity completely independent of the environment.

We can always find an environment where a function will not exhibit 'agentic' behavior, or where it will be maximally expressed. Nevertheless, this does not mean that the function itself is not agentic; it merely reflects the circumstances.

Accordingly, if we introduce a metric of agency for a function, we must ignore the specific characteristics of the environment; otherwise, we would merely be inventing a metric of environmental fitness.

Furthermore, I do not want a mesa-optimizer, which previously showed no agentic behavior, to seize control of the model in a specific situation and do something detrimental. Based on the deliberation about the practical application of the definition, I believe this is a reasonable requirement, and an environment-independent definition is thus more suitable here.

3 When determining if some reward function is “agentic” or not, it seems relevant whether or not an agent that maximises this reward function typically is guided by “immediate” concerns or “long-term” concerns. That is, does the agent need to plan ahead in order to do well according to the given reward function (and how much planning is required)?

Based on (2), an agent does not need to "succeed" in achieving its goal to be considered agentic; otherwise, we are simply defining a fitness function.

Regarding planning, I consider it more of an emergent property of agentic behavior rather than a cause or a reliable characteristic.

There can be situations that do not require planning, so, obviously, the discussion here is about the capacity for planning.

To answer this question, I would like to define the concept of 'planning' or at least 'structural behavior,' as this concept frequently arises in discussions within the SPAR group.

I like to think of the capacity for planning as an error in estimating the length of the loss trajectory in function space. This does not relate to the most efficient trajectory in any way. That is, I would prioritize not the ability to solve a task, but the ability to correctly assess one's own capabilities for solving it and to predict what tasks lie ahead and how much effort they will require. This would differentiate planning from a simple understanding of the environment.

In a way, this is a very industrial approach, but I haven't found more appealing definitions.

As for drawbacks, Ashe generally disliked it due to the perceived necessity of a global loss. I see no logic in this; the assessment skill always depends on the trajectory of some function, i.e., on a combination of environment assessment and one's own capabilities. The specific function does not matter.

Based on this definition:

- Loosely speaking, we can consider it good planning to understand that I have no idea how to solve a problem. The prediction is accurate, albeit useless.

- I can also consider it good planning to instantly find an optimal solution and subsequently calculate the length of a direct trajectory.

Again, it doesn't matter whether the agent found the global minimum or not; there isn't a strict dependence on KL here. There should also be a term responsible for predicting one's own capabilities. That is, I would say we separate

And it seems that, based on the premises of Active Inference, we find that an agent can plan, as it is literally part of KL, and it can do so to better understand the environment. However, strictly speaking, it is not obligated to plan, as the possibility remains to make

An empirical example of such an agent is a bacterium exhibiting chemotaxis. A bacterium is capable of detecting gradients of chemical substances in its environment using receptors on its surface. It effectively 'understands' whether the attractant concentration is increasing or decreasing as it moves. The bacterium does not plan its movements in any complex sense. It has no internal model of itself, its flagella, its swimming speed, or how its actions will affect its future position.

4 It also seems relevant how sensitive the agent is to perturbations of both the environment and of its own policy. That is, how bad would it be for the agent to sometimes take a random action, or have the environment sometimes change in a random way?

Well, from my definition it should depend on C + E. If change increases them, that's fine, otherwise not.

Joar's answer:

I can write a very large, complex function that will not possess a drive for planning, let alone for C/E. If computational complexity is meant, we can consider the Kolmogorov complexity of a function: in its general form, it is uncomputable. In terms of such complexity, a very long random function would possess it – I doubt that the longer a function is, the more agentic it becomes.

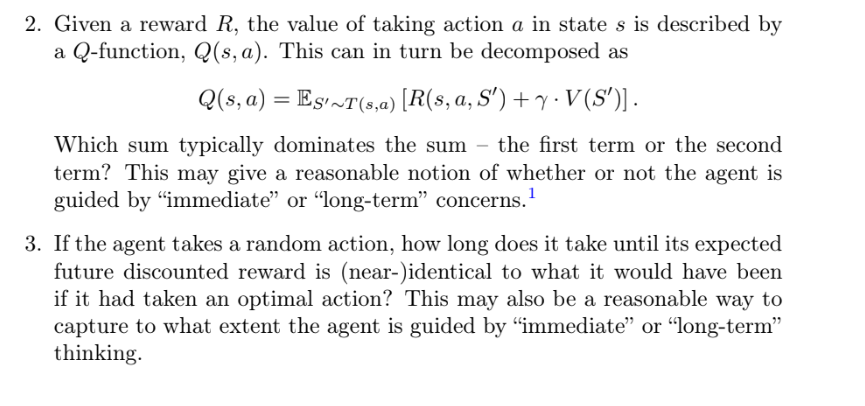

This definition is fundamental in the field of Reinforcement Learning (RL) and is known as the Bellman equation for the Q-function.

- R(s, a, S'): Immediate Reward. This is the reward an agent receives immediately after executing action

in state and transitioning to a new state . - γ · V(S'): Discounted Future Value. This is the expected value of the next state

, multiplied by the discount factor .

If, from the Q-definition, we assign an absolute value to future value, it might seem as if we are the best planner, but strictly speaking, this is not true, as it tells us nothing directly about the capacity for planning. I could pay no attention to the current state but place great emphasis on future states, while simultaneously having a completely incorrect understanding of the consequences of my actions.

If the loss simply explicitly includes an element responsible for the future, would that make the behavior include planning? Not necessarily.

An agent with a high discount factor highly values distant, large rewards. However, its policy might be extremely reactive: it simply moves towards the nearest goal marker, ignoring obstacles and not formulating plans. As a result, it constantly 'strives' for the future but cannot achieve it due to a lack of understanding of the consequences of its actions and an inability to plan.

4 Does the definition match our intuitive judgements for a few different example environments? That is, try to come up with a few simple environments and a few simple reward functions, some of which seem agentic, and some of which seem non-agentic. Does the formal definition match our intuitions?

I have tried to construct my definition based on the domain of application and empirical examples in response to each question.

5 Can you find or come up with any false positives or false negatives? That is, reward functions that intuitively seem agentic, but which do not fit the definition, or reward functions that intuitively seem non-agentic, but which do fit the definition?

Again, there are many limiting examples under these questions. Examples include an agent that 'worries' a lot but does nothing, or an agent that instantly achieves its goal. Many such examples exist.

6 Can you tell a story for why we should expect this definition to be a reasonable formalisation of agenticity?

- Defining agency through Active Inference allows for easily explaining situations where an entity we considered agentic performs actions contradicting its agency, through a mesa-optimizer's takeover.

- If we learn to measure the C/E functions within models and their individual components, we can effectively predict at what moments and under what conditions an agency shift will occur, and potentially prevent it. This is critically important for addressing the problem of mesa-optimizers and alignment in general.

- This definition tends towards refinement regarding C/E, yet in my own research, I have not been able to find any example of empirical agentic behavior or instrumental goal that cannot be expressed through them. This robustness in terms of a specific direction of refinement (as, for instance, with

) strikes me as a claim to uniqueness.

7 If two reward functions differ by (some combination of) positive linear scaling and potential shaping (Ng et al., 1999), then those reward functions induce the exact same preferences between all policies for all transition functions. It would therefore be reasonable for a definition of agenticity to be invariant to these two transformations. Is it?

If we were to apply linear transformations to individual components, such as

The fundamental principle underlying potential shaping is that it adds a constant to the total returned reward (or to the total loss function, if viewed as negative reward). Since adding a constant to the total function being minimized does not change the optimal policy, the property of agency, as defined by minimizing such a function, will remain invariant in this case too.

Thus, according to Ng, everything aligns.

8 In fact, for a given fixed transition function, two reward functions induce the same ordering of policies if and only if those reward functions differ by some combination of positive linear scaling, potential shaping, and “S ′ - redistribution” (Skalse and Abate, 2023). If the definition is given relative to an environment, then it seems reasonable that it should be invariant to these (and perhaps only to these) transformations of the reward function.

According to Skalse and Abate, S'-redistribution changes the individual reward values for transitioning from

Now let's consider the definition of agency:

S'-redistribution modifies the reward value received for transitioning to

Thus, S'-redistribution does not affect

This component completely depends on the environment's transition function (which defines the entropy

S'-redistribution, by definition, does not alter the transition function; therefore, it does not affect

Since

We have exhaustively reviewed a set of reward function transformations that preserve the optimal policy and found that the definition of agency is invariant to these changes. I believe this is a success.

Desirable Results

9 What fraction of all reward functions are agentic? That is, if we generate a random reward function, what is the probability of getting an agentic reward function? How does this depend on the environment dynamics?

In an infinite space, the chance of selecting a function from any given subset of all possible functions is 0. It is more appropriate here to use the concept of a measure from a finite set

If

In the context of your

where

The set of all agentic functions thus represents a linear combination of specific vectors

To introduce an even greater restriction and still define some measure, we can define a function

where

In this case, for each

If we consider the bounds

The measure of this "

Since

This value will be non-zero and will decrease as

This probability will be non-zero if

If the ratio of measures is at least 0.01 (which is quite substantial), then for

Of course, the environment was not considered here at all, and in certain environments, the emergence of agency is probably more preferential. However, if we consider an abstract agent in a vacuum, randomly chosen from the set of functions, we have defined a lower bound for the chance of its formation.

I got the green light to calculate limitations based on NN models, I'm happy.

10 What fraction of “human-written” reward functions are agentic? Based on some suitable repository of RL environments.

I suppose it's better to look from the perspective of theoretical RL functions. We could use STARC for comparison: https://arxiv.org/pdf/2309.15257.

IMO, it would be easier to describe if our RL functions turn out to strictly belong to a specific class of functions.

It's unlikely to be the case, but it's worth checking. Skylar Gu works on that, will knip for now.